Sure, the gaming event is a showcase for triple-A blockbusters, but a surprising number of small, thoughtful gems piqued our interest.

Category: Tech news

12 Best Tablets for Every Budget in 2018: iPad, Android, Fire HD, Surface

No matter if you prefer Android, iOS, Chrome, or Windows—these are the best tablets we’ve tried.

How Volvo’s Making the Polestar 1 From an Old Concept

Five years after showing off the Concept Coupe, Volvo has resurrected the much-loved design as the first offering from its newly electric-focused brand, Polestar.

How Technology Helped Me Cheat Dyslexia

Innovations in brain research and AI-fueled assistive technologies could level the playing field for those with language-based learning disabilities.

The Collapse of a $40 Million Nutrition Science Crusade

The Nutrition Science Initiative promised to study obesity and diabetes the right way. Now it’s broke, president-less, and all but gone.

Fake Video Will Complicate Viral Justice

Opinion: Videos provide transformative new avenues for justice, often summoning well-deserved Twitter mobs. Deep fakes could change all that.

You’ll Have to Look Closer to Understand These Tiny Worlds

Photographer Frank Kunert photographs miniature scenes of modern life—with a bizarre twist.

‘Westworld’ Recap, Season 2 Episode 9: The Many Lives of the Man in Black

As the series’s second season wraps up, the wall between his real-life self and Westworld persona comes crashing down.

Trump’s Meeting With Kim Jong-Un Tops This Week’s Internet News Roundup

Last week, social media was awash in commentary about the historic meeting between the US president and the North Korean leader.

Elon Musk Digs Chicago, Byton’s Tesla-Fighter, and More Car News This Week

Plus: Volvo gets some lidar, Seattle’s high-tech tunnel, and Boeing tries to make the future of flight a lot more fun.

This Crazy Slide Is Either Evil or Funny

It’s like the designer *wanted* people to crash in excellent viral videos.

The Powerful Groups Stonewalling a Greener Way to Die

“Aquamation,” a greener form of body disposal, is gaining acceptance in America. But some groups are fighting to stop it.

UK report warns DeepMind Health could gain ‘excessive monopoly power’

DeepMind’s foray into digital health services continues to raise concerns. The latest worries are voiced by a panel of external reviewers appointed by the Google-owned AI company to report on its operations after its initial data-sharing arrangements with the U.K.’s National Health Service (NHS) ran into a major public controversy in 2016.

The DeepMind Health Independent Reviewers’ 2018 report flags a series of risks and concerns, as they see it, including the potential for DeepMind Health to be able to “exert excessive monopoly power” as a result of the data access and streaming infrastructure that’s bundled with provision of the Streams app — and which, contractually, positions DeepMind as the access-controlling intermediary between the structured health data and any other third parties that might, in the future, want to offer their own digital assistance solutions to the Trust.

While the underlying FHIR (Fast Healthcare Interoperability Resources) standard deployed by DeepMind for Streams uses an open API, the contract between the company and the Royal Free Trust funnels connections via DeepMind’s own servers, and prohibits connections to other FHIR servers. It is a commercial structure that seemingly works against the openness and interoperability DeepMind’s co-founder Mustafa Suleyman has claimed to support.

“There are many examples in the IT arena where companies lock their customers into systems that are difficult to change or replace. Such arrangements are not in the interests of the public. And we do not want to see DeepMind Health putting itself in a position where clients, such as hospitals, find themselves forced to stay with DeepMind Health even if it is no longer financially or clinically sensible to do so; we want DeepMind Health to compete on quality and price, not by entrenching legacy position,” the reviewers write.

Though they point to DeepMind’s “stated commitment to interoperability of systems,” and “their adoption of the FHIR open API” as positive indications, writing: “This means that there is potential for many other SMEs to become involved, creating a diverse and innovative marketplace which works to the benefit of consumers, innovation and the economy.”

“We also note DeepMind Health’s intention to implement many of the features of Streams as modules which could be easily swapped, meaning that they will have to rely on being the best to stay in business,” they add.

However, stated intentions and future potentials are clearly not the same as on-the-ground reality. And, as it stands, a technically interoperable app-delivery infrastructure is being encumbered by prohibitive clauses in a commercial contract — and by a lack of regulatory pushback against such behavior.

The reviewers also raise concerns about an ongoing lack of clarity around DeepMind Health’s business model — writing: “Given the current environment, and with no clarity about DeepMind Health’s business model, people are likely to suspect that there must be an undisclosed profit motive or a hidden agenda. We do not believe this to be the case, but would urge DeepMind Health to be transparent about their business model, and their ability to stick to that without being overridden by Alphabet. For once an idea of hidden agendas is fixed in people’s mind, it is hard to shift, no matter how much a company is motivated by the public good.”

“We have had detailed conversations about DeepMind Health’s evolving thoughts in this area, and are aware that some of these questions have not yet been finalised. However, we would urge DeepMind Health to set out publicly what they are proposing,” they add.

DeepMind has suggested it wants to build healthcare AIs that are capable of charging by results. But Streams does not involve any AI. The service is also being provided to NHS Trusts for free, at least for the first five years — raising the question of how exactly the Google-owned company intends to recoup its investment.

Google of course monetizes a large suite of free-at-the-point-of-use consumer products — such as the Android mobile operating system; its cloud email service Gmail; and the YouTube video sharing platform, to name three — by harvesting people’s personal data and using that information to inform its ad targeting platforms.

Hence the reviewers’ recommendation for DeepMind to set out its thinking on its business model to avoid its intentions vis-a-vis people’s medical data being viewed with suspicion.

The company’s historical modus operandi also underlines the potential monopoly risks if DeepMind is allowed to carve out a dominant platform position in digital healthcare provision — given how effectively its parent has been able to turn a free-for-OEMs mobile OS (Android) into global smartphone market OS dominance, for example.

So, while DeepMind only has a handful of contracts with NHS Trusts for the Streams app and delivery infrastructure at this stage, the reviewers’ concerns over the risk of the company gaining “excessive monopoly power” do not seem overblown.

They are also worried about DeepMind’s ongoing vagueness about how exactly it works with its parent Alphabet, and what data could ever be transferred to the ad giant — an inevitably queasy combination when stacked against DeepMind’s handling of people’s medical records.

“To what extent can DeepMind Health insulate itself against Alphabet instructing them in the future to do something which it has promised not to do today? Or, if DeepMind Health’s current management were to leave DeepMind Health, how much could a new CEO alter what has been agreed today?” they write.

“We appreciate that DeepMind Health would continue to be bound by the legal and regulatory framework, but much of our attention is on the steps that DeepMind Health have taken to take a more ethical stance than the law requires; could this all be ended? We encourage DeepMind Health to look at ways of entrenching its separation from Alphabet and DeepMind more robustly, so that it can have enduring force to the commitments it makes.”

Responding to the report’s publication on its website, DeepMind writes that it’s “developing our longer-term business model and roadmap.”

“Rather than charging for the early stages of our work, our first priority has been to prove that our technologies can help improve patient care and reduce costs. We believe that our business model should flow from the positive impact we create, and will continue to explore outcomes-based elements so that costs are at least in part related to the benefits we deliver,” it continues.

So it has nothing to say to defuse the reviewers’ concerns about making its intentions for monetizing health data plain — beyond deploying a few choice PR soundbites.

On its links with Alphabet, DeepMind also has little to say, writing only that: “We will explore further ways to ensure there is clarity about the binding legal frameworks that govern all our NHS partnerships.”

“Trusts remain in full control of the data at all times,” it adds. “We are legally and contractually bound to only using patient data under the instructions of our partners. We will continue to make our legal agreements with Trusts publicly available to allow scrutiny of this important point.”

“There is nothing in our legal agreements with our partners that prevents them from working with any other data processor, should they wish to seek the services of another provider,” it also claims in response to additional questions we put to it.

“We hope that Streams can help unlock the next wave of innovation in the NHS. The infrastructure that powers Streams is built on state-of-the-art open and interoperable standards, known as FHIR. The FHIR standard is supported in the UK by NHS Digital, NHS England and the INTEROPen group. This should allow our partner trusts to work more easily with other developers, helping them bring many more new innovations to the clinical frontlines,” it adds in additional comments to us.

“Under our contractual agreements with relevant partner trusts, we have committed to building FHIR API infrastructure within the five year terms of the agreements.”

Asked about the progress it’s made on a technical audit infrastructure for verifying access to health data, which it announced last year, it reiterated the wording on its blog, saying: “We will remain vigilant about setting the highest possible standards of information governance. At the beginning of this year, we appointed a full time Information Governance Manager to oversee our use of data in all areas of our work. We are also continuing to build our Verifiable Data Audit and other tools to clearly show how we’re using data.”

So developments on that front look as slow as we expected.

The Google-owned U.K. AI company began its push into digital healthcare services in 2015, quietly signing an information-sharing arrangement with a London-based NHS Trust that gave it access to around 1.6 million people’s medical records for developing an alerts app for a condition called Acute Kidney Injury.

It also inked an MoU with the Trust where the pair set out their ambition to apply AI to NHS data sets. (They even went so far as to get ethical sign-offs for an AI project, but have consistently claimed the Royal Free data was not fed to any AIs.)

However, the data-sharing collaboration ran into trouble in May 2016 when the scope of patient data being shared by the Royal Free with DeepMind was revealed (via investigative journalism, rather than by disclosures from the Trust or DeepMind).

None of the ~1.6 million people whose non-anonymized medical records had been passed to the Google-owned company had been informed or asked for their consent. And questions were raised about the legal basis for the data-sharing arrangement.

Last summer the U.K.’s privacy regulator concluded an investigation of the project — finding that the Royal Free NHS Trust had broken data protection rules during the app’s development.

Yet despite ethical questions and regulatory disquiet about the legality of the data sharing, the Streams project steamrollered on. And the Royal Free Trust went on to implement the app for use by clinicians in its hospitals, while DeepMind has also signed several additional contracts to deploy Streams to other NHS Trusts.

More recently, the law firm Linklaters completed an audit of the Royal Free Streams project, after being commissioned by the Trust as part of its settlement with the ICO. Though this audit only examined the current functioning of Streams. (There has been no historical audit of the lawfulness of people’s medical records being shared during the build and test phase of the project.)

Linklaters did recommend the Royal Free terminates its wider MoU with DeepMind — and the Trust has confirmed to us that it will be following the firm’s advice.

“The audit recommends we terminate the historic memorandum of understanding with DeepMind which was signed in January 2016. The MOU is no longer relevant to the partnership and we are in the process of terminating it,” a Royal Free spokesperson told us.

So DeepMind, probably the world’s most famous AI company, is in the curious position of being involved in providing digital healthcare services to U.K. hospitals that don’t actually involve any AI at all. (Though it does have some ongoing AI research projects with NHS Trusts too.)

In mid 2016, at the height of the Royal Free DeepMind data scandal — and in a bid to foster greater public trust — the company appointed the panel of external reviewers who have now produced their second report looking at how the division is operating.

And it’s fair to say that much has happened in the tech industry since the panel was appointed to further undermine public trust in tech platforms and algorithmic promises — including the ICO’s finding that the initial data-sharing arrangement between the Royal Free and DeepMind broke U.K. privacy laws.

The eight members of the panel for the 2018 report are: Martin Bromiley OBE; Elisabeth Buggins CBE; Eileen Burbidge MBE; Richard Horton; Dr. Julian Huppert; Professor Donal O’Donoghue; Matthew Taylor; and Professor Sir John Tooke.

In their latest report the external reviewers warn that the public’s view of tech giants has “shifted substantially” versus where it was even a year ago — asserting that “issues of privacy in a digital age are if anything, of greater concern.”

At the same time politicians are also gazing rather more critically on the works and social impacts of tech giants.

Although the U.K. government has also been keen to position itself as a supporter of AI, providing public funds for the sector and, in its Industrial Strategy white paper, identifying AI and data as one of four so-called “Grand Challenges” where it believes the U.K. can “lead the world for years to come” — including specifically name-checking DeepMind as one of a handful of leading-edge homegrown AI businesses for the country to be proud of.

Still, questions over how to manage and regulate public sector data and AI deployments — especially in highly sensitive areas such as healthcare — remain to be clearly addressed by the government.

Meanwhile, the encroaching ingress of digital technologies into the healthcare space (even when the technologies don’t involve any AI) is already presenting major challenges by putting pressure on existing information governance rules and structures, and raising the specter of monopolistic risk.

Asked whether it offers any guidance to NHS Trusts around digital assistance for clinicians, including specifically whether it requires multiple options be offered by different providers, the NHS’ digital services provider, NHS Digital, referred our question on to the Department of Health (DoH), saying it’s a matter of health policy.

The DoH in turn referred the question to NHS England, the executive non-departmental body which commissions contracts and sets priorities and directions for the health service in England.

And at the time of writing, we’re still waiting for a response from the steering body.

Ultimately it looks like it will be up to the health service to put in place a clear and robust structure for AI and digital decision services that fosters competition by design by baking in a requirement for Trusts to support multiple independent options when procuring apps and services.

Without that important check and balance, the risk is that platform dynamics will quickly dominate and control the emergent digital health assistance space — just as big tech has dominated consumer tech.

But publicly funded healthcare decisions and data sets should not simply be handed to the single market-dominating entity that’s willing and able to burn the most resource to own the space.

Nor should government stand by and do nothing when there’s a clear risk that a vital area of digital innovation will be closed down by a tech giant muscling in and positioning itself as a gatekeeper before others have had a chance to show what their ideas are made of, and before a market has even had the chance to form.

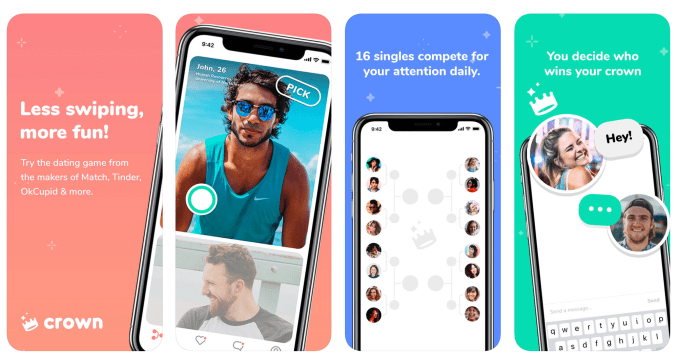

Crown, a new app from Tinder’s parent company, turns dating into a game

If you’re already resentful of online dating culture and how it turned finding companionship into a game, you may not be quite ready for this: Crown, a new dating app that actually turns getting matches into a game. Crown is the latest project to launch from Match Group, the operator of a number of dating sites and apps including Match, Tinder, Plenty of Fish, OkCupid, and others.

The app was thought up by Match Product Manager Patricia Parker, who understands first-hand both the challenges and the benefits of online dating: she met her husband online.

Crown won Match Group’s internal “ideathon,” and was then developed in-house by a team of millennial women, with a goal of serving women’s needs in particular.

The main problem Crown is trying to solve is the cognitive overload of using dating apps. As Match Group scientific advisor Dr. Helen Fisher explained a few years ago to Wired, dating apps can become addictive because there’s so much choice.

“The more you look and look for a partner the more likely it is that you’ll end up with nobody…It’s called cognitive overload,” she had said. “There is a natural human predisposition to keep looking—to find something better. And with so many alternatives and opportunities for better mates in the online world, it’s easy to get into an addictive mode.”

Millennials are also prone to swipe fatigue, as they spend an average of 10 hours per week in dating apps, and are being warned to cut down or face burnout.

Crown’s approach to these issues is to turn getting matches into a game of sorts.

While other dating apps present you with an endless stream of people to pick from, Crown offers a more limited selection.

Every day at noon, you’re presented with 16 curated matches, picked by some mysterious algorithm. You move through the matches by choosing who you like more between two people at a time.

That is, the screen displays two photos instead of one, and you “crown” your winner. (Get it?) This process then repeats with two people shown at a time, until you reach your “Final Four.”

Those winners are then given the opportunity to chat with you, or they can choose to pass.
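The pairing process described above can be sketched in a few lines of Python. This is only an illustration of a single-elimination bracket under stated assumptions, not Match Group's actual implementation: the function name `run_bracket`, the `prefer` callback standing in for the user's tap, and the way candidates are paired off in list order are all hypothetical.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def run_bracket(
    candidates: Sequence[T],
    prefer: Callable[[T, T], T],
    finalists: int = 4,
) -> List[T]:
    """Pair candidates off and keep each pair's winner until `finalists` remain.

    `prefer` is a hypothetical stand-in for the user "crowning" one of the
    two profiles shown on screen; it must return one of its two arguments.
    """
    remaining = list(candidates)
    while len(remaining) > finalists:
        if len(remaining) % 2:
            raise ValueError("each round needs an even number of candidates")
        # Show neighbours two at a time and keep whichever one is crowned.
        remaining = [
            prefer(remaining[i], remaining[i + 1])
            for i in range(0, len(remaining), 2)
        ]
    return remaining


if __name__ == "__main__":
    # 16 daily matches, represented here by the numbers 0..15; the "user"
    # in this toy example always crowns the higher number of each pair.
    print(run_bracket(list(range(16)), max))
```

With 16 daily matches and a Final Four, the user makes 8 choices in the first round and 4 in the second, 12 in total, which is the sense in which the daily selection is bounded rather than endless.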

In addition to your own winners, you may also “win” the crown among other brackets, which gives you more matches to contend with.

Of course, getting dubbed a winner is a stronger signal on Crown than on an app like Tinder, where it’s more common for matches to not start conversations. This could encourage Crown users to chat, given they know there’s more of a genuine interest since they “beat out” several others. But on the flip side, getting passed on Crown is going to be a lot more of an obvious “no,” which could be discouraging.

“It’s like a ‘Bachelorette’-style process of elimination that helps users choose between quality over quantity,” explains Andy Chen, Vice President, Match Group. “Research shows that the human brain can only track a set number of relationships…and technology has not helped us increase this limit.”

Chen is referring to Dunbar’s number, which holds that people can only really maintain about 150 social relationships. Giving users a never-ending list of possible matches on Tinder, then, isn’t helping people feel like they have options; it’s overloading the brain.

While turning matchmaking into a game feels a bit dehumanizing – maybe even more so than on Tinder, with its Hot-or-Not-inspired vibe – the team says Crown actually increases the odds, on average, of someone being selected, compared with traditional dating apps.

“When choosing one person over another, there is always a winner. The experience actually encourages a user playing the game to find reasons to say yes,” says Chen.

Crown has been live in a limited beta for a few months, but is now officially launched in L.A. (how appropriate) with more cities to come. For now, users outside L.A. will be matched with those closest to them.

There are today several thousand users on the app, and it’s organically growing, Chen says.

Plus, Crown is seeing day-over-day retention rates which are “already as strong” as Match Group’s other apps, we’re told.

Sigh.

The app is a free download on iOS only for now. An Android version is coming, the website says.

Teaching computers to plan for the future

As humans, we’ve gotten pretty good at shaping the world around us. We can choose the molecular design of our fruits and vegetables, travel faster and farther and stave off life-threatening diseases with personalized medical care. However, what continues to elude our molding grasp is the airy notion of “time” — how to see further than our present moment, and ultimately how to make the most of it. As it turns out, robots might be the ones that can answer this question.

Computer scientists from the University of Bonn in Germany wrote this week that they were able to design software that could predict a sequence of events up to five minutes into the future with accuracy between 15 and 40 percent. These values might not seem like much on paper, but researcher Dr. Juergen Gall says they represent a step toward a new area of machine learning that goes beyond single-step prediction.

Although Gall’s goal of teaching a system how to understand a sequence of events is not new (after all, this is a primary focus of the fields of machine learning and computer vision), it is unique in its approach. Thus far, research in these fields has focused on the interpretation of a current action or the prediction of an anticipated next action. This was seen recently in the news when a paper from Stanford AI researchers reported designing an algorithm that could achieve up to 90 percent accuracy in its predictions regarding end-of-life care.

When researchers provided the algorithm with data from more than two million palliative-care patient records, it was able to analyze patterns in the data and predict when the patient would pass with high levels of accuracy. However, unlike Gall’s research, this algorithm focused on a retrospective, single prediction.

Accuracy itself is a contested question in the field of machine learning. While it appears impressive on paper to report accuracies ranging upwards of 90 percent, there is debate about the over-inflation of these values through cherry-picking “successful” data in a process called p-hacking.

In their experiment, Gall and his team used hours of video data demonstrating different cooking actions (e.g. frying an egg or tossing a salad) and presented the software with only portions of the action and tasked it with predicting the remaining sequence based on what it had “learned.” Through their approach, Gall hopes the field can take a step closer to true human-machine symbiosis.
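To make the setup concrete, here is a minimal sketch of how such an experiment could be scored. It is not the Bonn team's code or model: it assumes each video is reduced to one action label per time step, that "accuracy" means the share of future time steps whose predicted label matches what actually happened, and it uses a deliberately naive baseline (`repeat_last_action`) in place of a learned predictor. All names and the example labels are hypothetical.

```python
from typing import List, Sequence, Tuple


def split_observed(
    labels: Sequence[str], observed_fraction: float
) -> Tuple[List[str], List[str]]:
    """Split a labelled video into the part shown to the model and the part to predict."""
    cut = int(len(labels) * observed_fraction)
    return list(labels[:cut]), list(labels[cut:])


def repeat_last_action(observed: Sequence[str], horizon: int) -> List[str]:
    """Naive baseline (an assumption, not the paper's model): the last seen action continues."""
    return [observed[-1]] * horizon


def future_accuracy(predicted: Sequence[str], actual: Sequence[str]) -> float:
    """Fraction of future time steps where the predicted label matches the true one."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    hits = sum(p == a for p, a in zip(predicted, actual))
    return hits / len(actual)


if __name__ == "__main__":
    # Hypothetical ten-step "frying an egg" video, one label per time step.
    video = ["crack_egg"] * 3 + ["fry_egg"] * 5 + ["plate_egg"] * 2
    observed, future = split_observed(video, observed_fraction=0.5)
    guess = repeat_last_action(observed, horizon=len(future))
    print(future_accuracy(guess, future))
```

In this toy example the baseline gets the remaining frying steps right but misses the plating steps entirely, which illustrates why predicting a whole sequence is harder than predicting only the next action.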

“[In the industry] people talk about human robot collaboration but in the end there’s still a separation; they’re not really working close together,” says Gall.

Instead of only reacting or anticipating, Gall proposes that, with a proper hardware body, this software could help human workers in industrial settings by intuitively knowing the task and helping them complete it. Even more, Gall sees a purpose for this technology in a domestic setting, as well.

“There are many older people and there’s efforts to have this kind of robot for care at home,” says Gall. “In ten years I’m very convinced that service robots [will] support care at home for the elderly.”

The number of Americans over the age of 65 today is approximately 46 million, according to a Population Reference Bureau report, and is predicted to double by the year 2060. Of that population, roughly 1.4 million live in nursing homes according to a 2014 CDC report. The impact that intuitive software like Gall’s could have has been explored in Japan, where just over one-fourth of the country’s population is elderly. From PARO, a soft, robotic therapy seal, to the sleek companion robot Pepper from SoftBank Robotics, Japan is beginning to embrace the calm, nurturing assistance of these machines.

With this advance in technology for the elderly also comes the bitter taste that perhaps these technologies will only create a further divide between the generations, outsourcing love and care to a machine. For a yet-to-mature industry it’s hard to say where this path will conclude, but ultimately that is in the hands of developers to decide, not the software or robots they develop. These machines may be getting better at predicting the future, but even to them their fates are still being coded.