Dave Davis joined Copyright Clearance Center in 1994 and currently serves as a research analyst. He previously held directorships in both public libraries and corporate libraries and earned joint master’s degrees in Library and Information Sciences and Medieval European History from Catholic University of America.

Back in May, as part of a settlement, Spotify agreed to pay more than $112 million to clean up some copyright problems. Even for a service with millions of users, that had to leave a mark. No one wants to be dragged into court all the time, not even bold, disruptive technology start-ups.

On October 11th, the President signed the Hatch-Goodlatte Music Modernization Act (the “Act”, or “MMA”). The MMA goes back, legislatively, to at least 2013, when Chairman Goodlatte (R-VA) announced that, as Chairman of the House Judiciary Committee, he planned to conduct a “comprehensive” review of issues in US copyright law. Ranking Member Jerry Nadler (D-NY) was also deeply involved in this process, as were Senators Hatch (R-UT), Leahy (D-VT), and Wyden (D-OR). But this legislation didn’t fall from the sky; far from it.

After many hearings, several “roadshow” panels around the country, and a couple of elections, in early 2018 Goodlatte announced his intent to move forward on addressing several looming issues in music copyright before his planned retirement from Congress at the end of his current term (January 2019). With that deadline in place, the push was on, and through the spring and summer, the House Judiciary Committee and their colleagues in the Senate worked to complete the text of the legislation and move it through the process. By late September, the House and Senate versions had been reconciled and the bill moved to the President’s desk.

What’s all this about streaming?

As enacted, the Act instantiates several changes to music copyright in the US, especially as regards streaming music services. What does “streaming” refer to in this context? Basically, it occurs when a provider makes music available to listeners, over the internet, without creating a downloadable or storable copy: “Streaming differs from downloads in that no copy of the music is saved to your hard drive.”

“It’s all about the Benjamins.”

One part, by far the largest change in terms of money, provides that a new royalty regime be created for digital streaming of musical works, e.g. by services like Spotify and Apple Music. Pre-1972 recordings, and the creators involved in making them (including, for the first time, audio engineers, studio mixers and record producers), are also brought under this royalty umbrella.

These are significant, generally beneficial results for a piece of legislation. But to make this revenue bounty fully effective, a new licensing entity will have to be set up with the ability to first collect, and then distribute, the money. Think “ASCAP/BMI for streaming.” This new non-profit will be the first such “collective licensing” copyright organization set up in the US in quite some time.

Collective Licensing: It’s not “Money for Nothing”, right?

What do we mean by “collective licensing” in this context, and how will this new organization be created and organized to engage in it? Collective licensing is primarily an economically efficient mechanism for (A) gathering up monies due for certain uses of works under copyright (in this case, digital streaming of musical recordings), and (B) distributing the royalty checks back to the rights-holding parties (e.g. recording artists, their estates in some cases, and record labels). Generally speaking, in collective licensing:

“…rights holders collect money that would otherwise be in tiny little bits that they could not afford to collect, and in that way they are able to protect their copyright rights. On the flip side, substantial users of lots of other people’s copyrighted materials are prepared to pay for it, as long as the transaction costs are not extreme.”

—Fred Haber, VP and Corporate Counsel, Copyright Clearance Center

The Act envisions the new organization as setting up and implementing a new, extensive, and publicly accessible database of musical works and the rights attached to them. Nothing quite like this is currently available, although resources like Gracenote suggest a good start along those lines. After it is set up and the initial database has a sufficient number of records, the new collective licensing agency will then get down to the business of offering licenses:

“…a blanket statutory license administered by a nonprofit mechanical licensing collective. This collective will collect and distribute royalties, work to identify songs and their owners for payment, and maintain a comprehensive, publicly accessible database for music ownership information.”

— Regan A. Smith, General Counsel and Associate Register of Copyrights

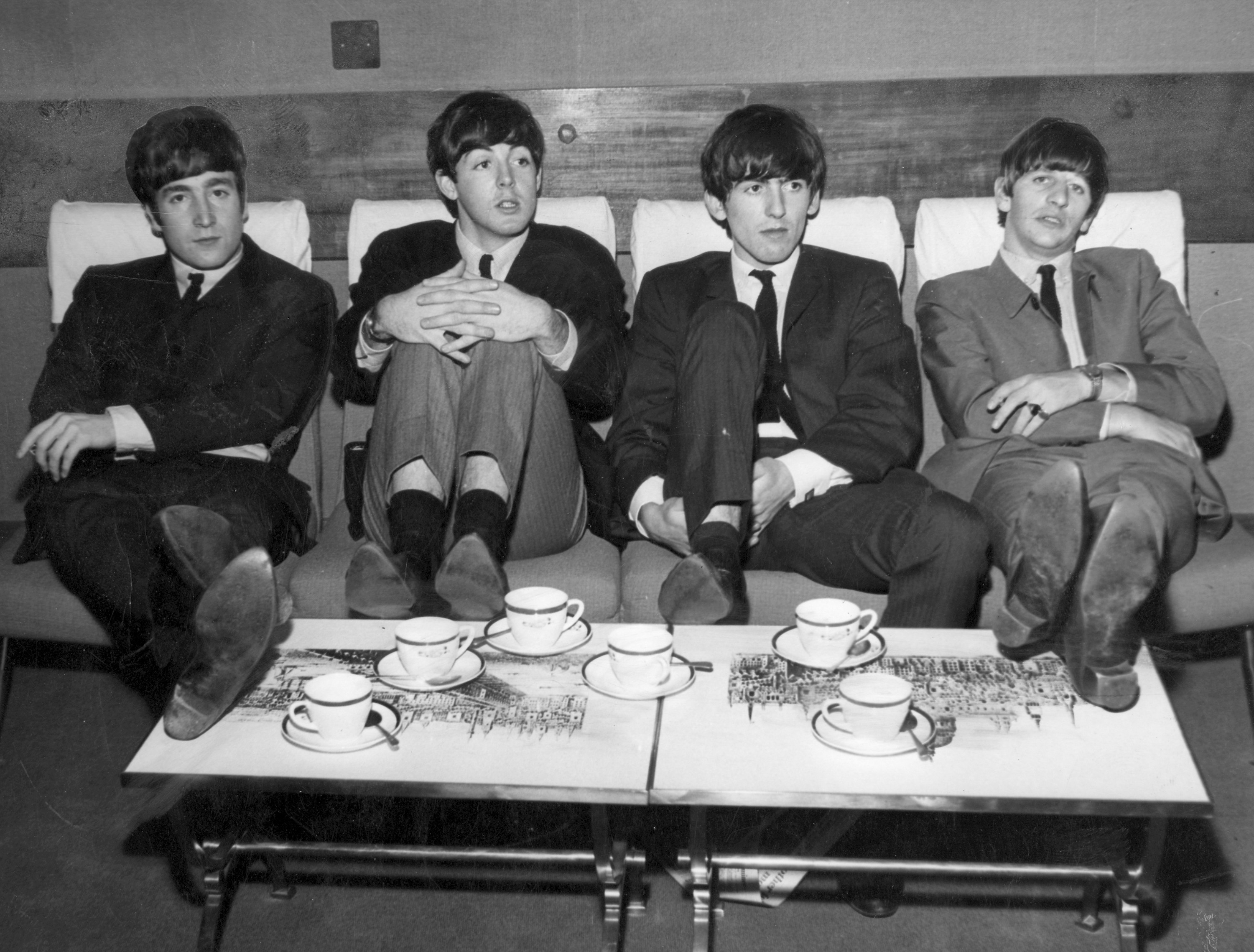

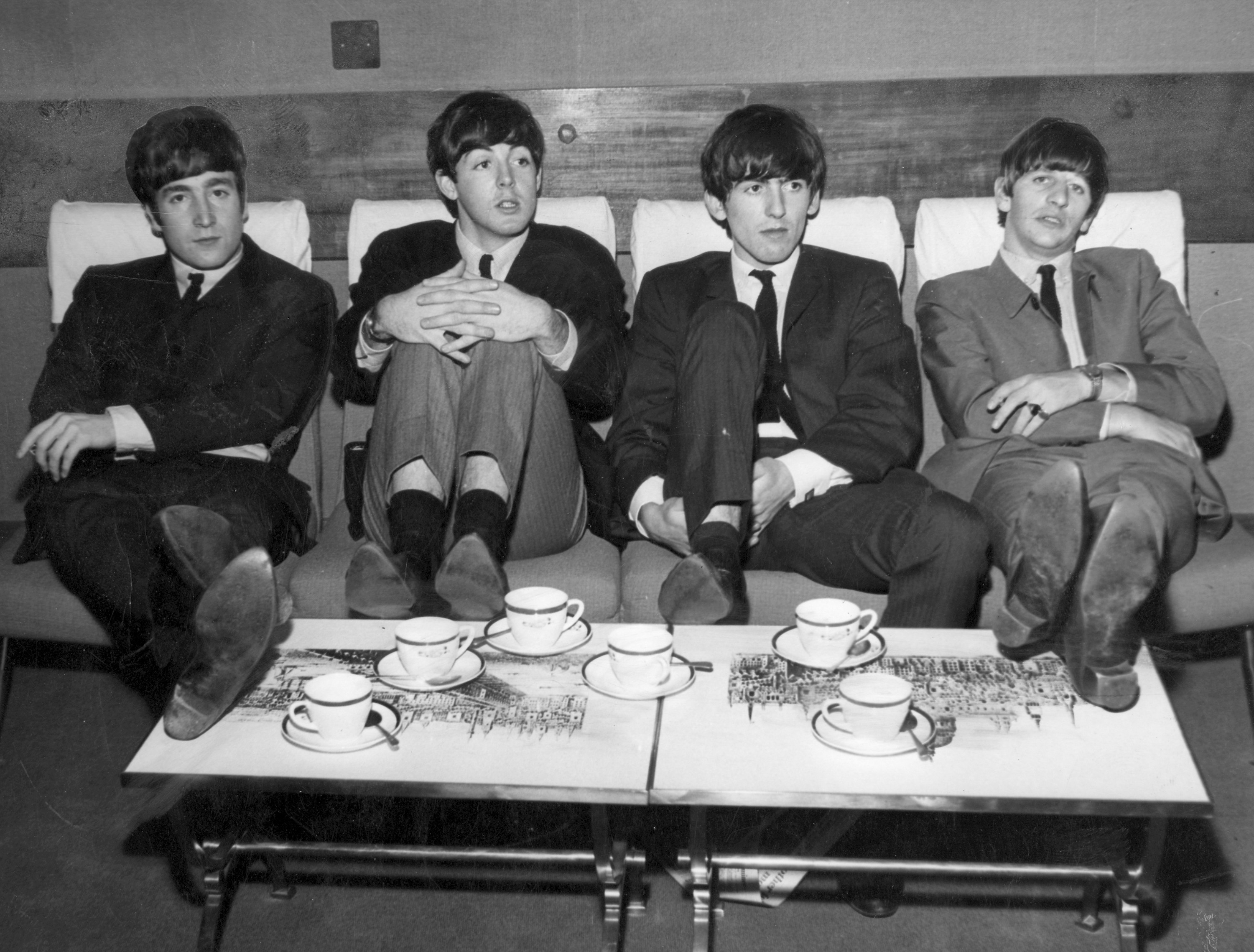

The Liverpool beat group The Beatles, with John Lennon, Paul McCartney, George Harrison and Ringo Starr, take it easy resting their feet on a table, during a break in rehearsals for the Royal variety show at the Prince of Wales Theater, London, England, November 4, 1963. (AP Photo)

You “Can’t Buy Me Love”, so who is all this going to benefit?

In theory, the listening public should be the primary beneficiary. More music available through digital streaming services means more exposure, and potentially more money, for recording artists. For students of music, the new database of recorded works and licenses will serve to clarify who is (or was) responsible for what. Another public benefit will be fewer actions on digital streaming issues clogging up the courts.

There’s an interesting wrinkle in the Act providing for the otherwise authorized use of “orphaned” musical works such that these can now be played in library or archival (i.e. non-profit) contexts. “Orphan works” are those which may still be protected under copyright, but for which the legitimate rights holders are unknown, and, sometimes, undiscoverable. This is the first implementation of orphan works authorization in US copyright law. Cultural services – like Open Culture – can look forward to being able to stream more musical works without incurring risk or hindrance (provided that the proper forms are filled out). This implies that some great music is now more likely to find new audiences and thereby be preserved for posterity. Even the Electronic Frontier Foundation (EFF), generally no great fan of new copyright legislation, finds something to like in the Act.

In the land of copyright wonks, and in another line of infringement suits, this resolution of the copyright status of musical recordings released before 1972 seems, in my opinion, fair and workable. In order to accomplish that, the Act also had to address the matter of the duration of these new copyright protections, which is always (post-1998) a touchy subject:

- For recordings first published before 1923, the additional time period ends on December 31, 2021.

- For recordings first published between 1923-1946, the additional time period is 5 years after the general 95-year term.

- For recordings first published between 1947-1956, the additional time period is 15 years after the general 95-year term.

- For recordings first published between 1957-February 15, 1972, the additional time period ends on February 15, 2067.

(Source: US Copyright Office)
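For readers who like to see the arithmetic, the schedule above can be sketched in a few lines of Python. This is only an illustration of the four tiers as listed, not legal advice or an official calculator. The function name `protection_end` is hypothetical, and two details are assumptions of this sketch rather than statements from the list: that the 95-year terms run through December 31 of their final year, and that a recording dated February 15, 1972 or later falls outside the schedule.

```python
from datetime import date

# Boundary date named in the schedule. Treating recordings from this date
# onward as out of scope is an assumption of this sketch.
CUTOFF = date(1972, 2, 15)
GENERAL_TERM_YEARS = 95


def protection_end(first_published: date) -> date:
    """Return the last day of protection under the four tiers listed above."""
    if first_published >= CUTOFF:
        raise ValueError("not a pre-1972 recording under this schedule")
    year = first_published.year
    if year < 1923:
        return date(2021, 12, 31)      # fixed end date for the oldest tier
    if year <= 1946:
        extra = 5                      # 95-year term plus 5 years
    elif year <= 1956:
        extra = 15                     # 95-year term plus 15 years
    else:
        return date(2067, 2, 15)       # fixed end date for 1957 to early 1972
    # Assumption: the term runs through December 31 of its final year.
    return date(year + GENERAL_TERM_YEARS + extra, 12, 31)


for sample in (date(1920, 6, 1), date(1935, 3, 10),
               date(1950, 9, 5), date(1963, 11, 4)):
    print(sample.year, "->", protection_end(sample).isoformat())
```

Under these assumptions, a recording first published in 1935 would stay protected through the end of 2035, while one from 1963 would run to February 15, 2067.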

(Photo by Theo Wargo/Getty Images for Live Nation)

Money (That’s What I Want – and lots and lots of listeners, too.)

For the digital music services themselves, this statutory or ‘blanket’ license arrangement should mean fewer infringement actions being brought; this might even help their prospects for investment and encourage new and more innovative services to come into the mix.

“And, in The End…”

This new legislation, now the law of the land, extends the history of American copyright law in new and substantial ways. Its actual implementation is only now beginning. Although five years might seem like a lifetime in popular culture, in politics it amounts to several eons. And let’s not lose sight of the fact that the industry got over its perceived short-term self-interests enough, this time, to agree to support something that Congress could pass. That’s rare enough to take note of and applaud.

This law lacks perfection, as all laws do. The licensing regime it envisions will not satisfy everyone, but every constituent, every stakeholder, got something. From the perspective of right now, chances seem good that, a few years from now, the achievement of the Hatch-Goodlatte Music Modernization Act will be viewed as a net positive for creators of music, for the distributors of music, for scholars, fans of ‘open culture’, and for the listening public. In copyright, you can’t do better than that.