Berlin-based Zizoo, a startup that describes itself as booking.com for boats, has nabbed a €6.5 million (~$7.4M) Series A to help more millennials find holiday yachts to mess about taking selfies in.

Zizoo says its Series A — which was led by Revo Capital, with participation from new investors including Coparion, Check24 Ventures and PUSH Ventures — was “significantly oversubscribed”.

Existing investors including MairDumont Ventures, aws Founders Fund, Axel Springer Digital Ventures and Russmedia International also participated in the round.

We first came across Zizoo some three years ago when they won our pitching competition in Budapest.

We’re happy to say they’ve come a long way since. The team is now 60 people strong and has business relationships with ~1,500 charter companies, serving up more than 21,000 boats for rent across 30 countries. Its search-and-book platform caters to a full range of “sailing experiences”, from experienced sailor to novice and, on the pricing front, from luxury to budget.

Registered users passed the 100,000 mark this year, according to founder and CEO Anna Banicevic. She also tells us that revenue growth has been 2.5x year-on-year for the past three years.

Commenting on the Series A in a statement, Revo Capital’s managing director Cenk Bayrakdar said: “The yacht charter market is one of the most underserved verticals in the travel industry despite its huge potential. We believe in Zizoo’s successful future as a leading SaaS-enabled marketplace.”

The new funds will be put towards growing the business, including expansion into new markets, product development and recruitment across the board.

Zizoo founder and CEO Anna Banicevic at its Berlin offices

“We’re looking to strengthen our presence in the US, where we’ve seen the biggest YoY growth, while also [expanding] our inventory in hot locations such as Greece, Spain and the Caribbean,” says Banicevic on market expansion. “We will also be aggressively pushing markets such as France and Spain where consumers show a growing interest in boat holidays.”

Zizoo intends to hire 40 more employees over the next year to meet what it dubs “the booming demand for sailing experiences, especially among millennials”.

So why do millennials love boating holidays so much? Zizoo says the 20-40 age range makes up the “majority” of its customers.

Banicevic reckons the answer is they’re after a slice of ‘affordable luxury’.

“After the recent boom of the cruising industry, millennials are well familiar with the concept of holidays at sea. However, sailing holidays (yachting) are much more fitting to the millennial’s strive for independence, adventure and experiences off the beaten path,” she suggests.

“Yachting is a growing trend no longer reserved for the rich and famous — and millennials want a piece of that. On our platform, users can book a boat holiday for as low as £25 per person per night (this is an example of a sailboat in Croatia).”

On the competition front, she says the main rival is the offline sphere (“where 90% of business is conducted by a few large and many small travel agents”).

But a few rival platforms have emerged “in the last few years” — and here she reckons Zizoo has managed to outgrow the startup competition “thanks to our unique vertically integrated business model, offering suppliers a booking management system and making it easy for the user to book a boat holiday”.

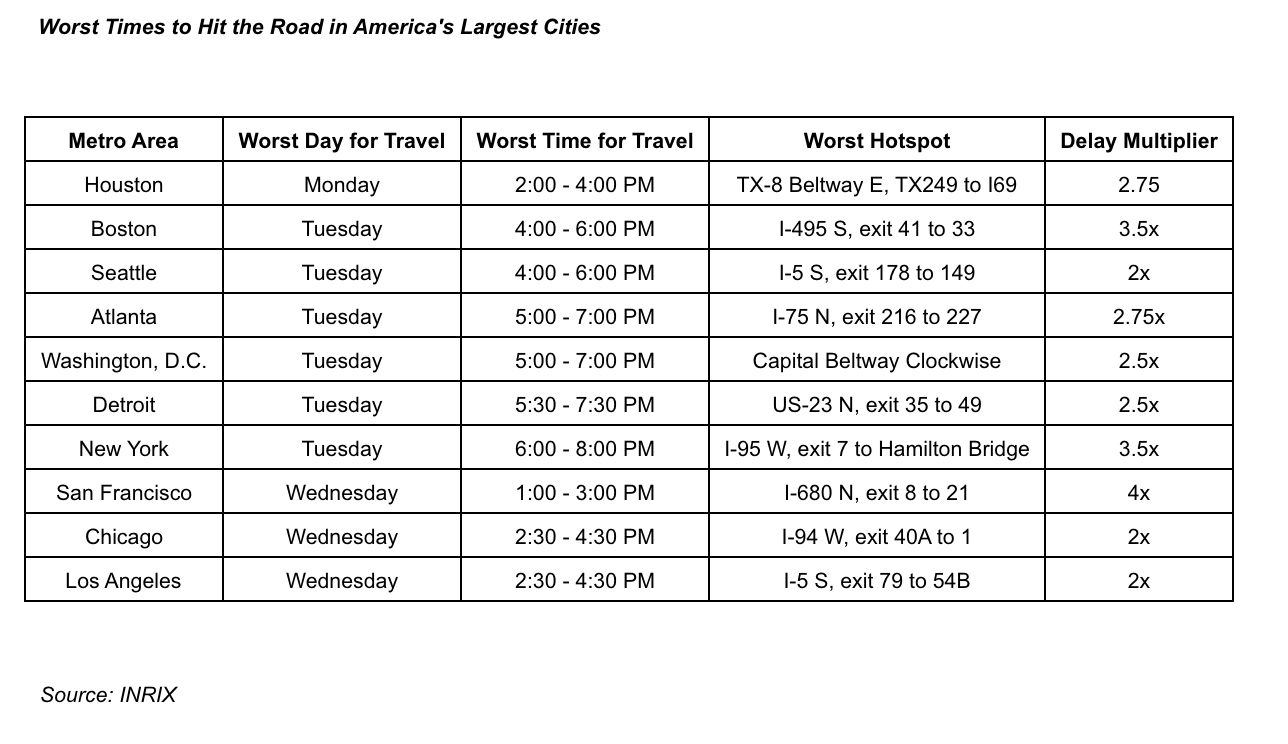
